This PR completes the conversion of non-interactive `codex exec` to use the app server rather than directly using core events and methods.

### Summary

- move `codex-exec` off exec-owned `AuthManager` and `ThreadManager`

state

- route exec bootstrap, resume, and auth refresh through existing

app-server paths

- replace legacy `codex/event/*` decoding in exec with typed app-server

notification handling

- update human and JSONL exec output adapters to translate existing

app-server notifications only

- clean up the "app server client" layer by removing support for legacy notifications, which is no longer needed

- remove exposure of `authManager` and `threadManager` from "app server

client" layer

### Testing

- `exec` has pretty extensive unit and integration tests already, and

these all pass

- In addition, I asked Codex to put together a comprehensive manual set

of tests to cover all of the `codex exec` functionality (including

command-line options), and it successfully generated and ran these tests

## Summary

- move the pure sandbox policy transform helpers from `codex-core` into

`codex-sandboxing`

- move the corresponding unit tests with the extracted implementation

- update `core` and `app-server` callers to import the moved APIs

directly, without re-exports or proxy methods

## Testing

- cargo test -p codex-sandboxing

- cargo test -p codex-core sandboxing

- cargo test -p codex-app-server --lib

- just fix -p codex-sandboxing

- just fix -p codex-core

- just fix -p codex-app-server

- just fmt

- just argument-comment-lint

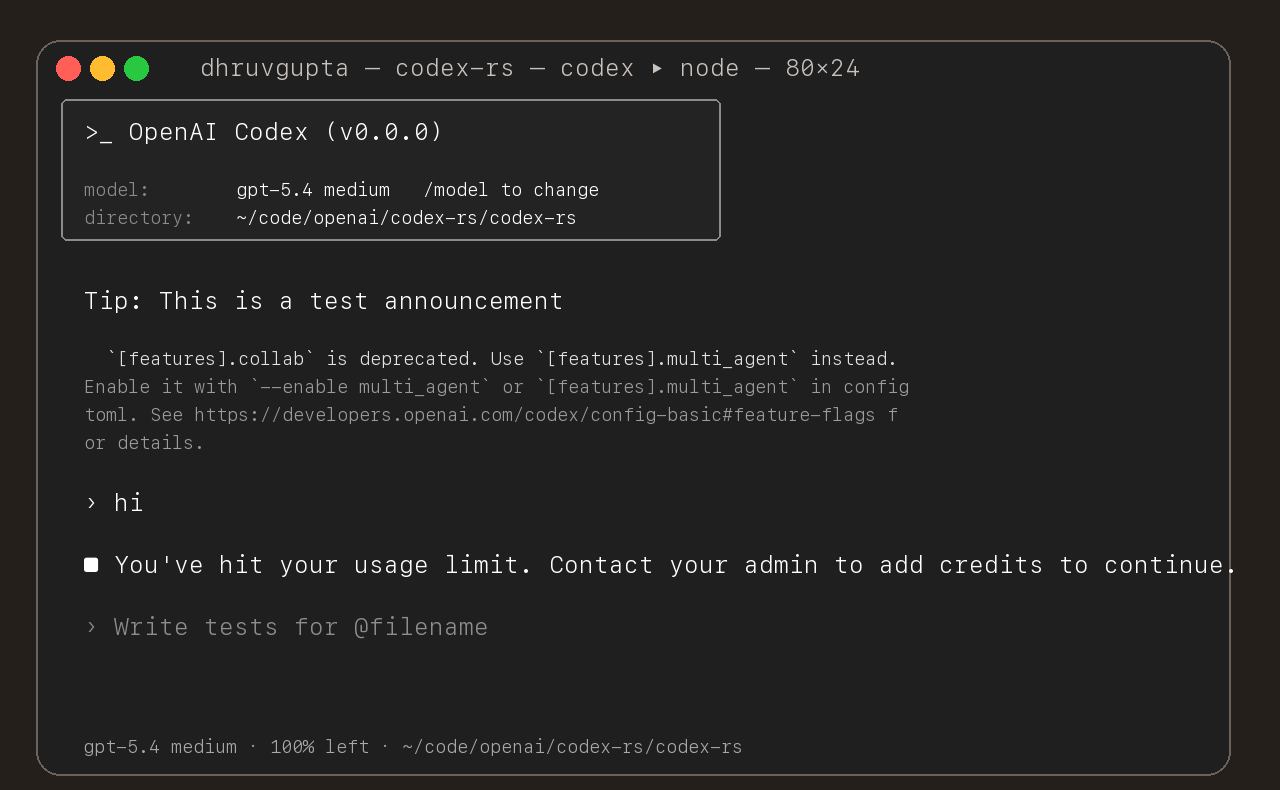

## Summary

- update the self-serve business usage-based limit message to direct

users to their admin for additional credits

- add a focused unit test for the self_serve_business_usage_based plan

branch

Also added:

If you hit a rate limit but still had credits, the Codex CLI would tell you to switch models. It no longer suggests this when credits remain.

## Test

- launched the source-built CLI and verified the updated message is

shown for the self-serve business usage-based plan

## Summary

- add `ForkSnapshotMode` to `ThreadManager::fork_thread` so callers can

request either a committed snapshot or an interrupted snapshot

- share the model-visible `<turn_aborted>` history marker between the

live interrupt path and interrupted forks

- update the small set of direct fork callsites to pass

`ForkSnapshotMode::Committed`

Note: this lets `/btw` work similarly to pressing Esc to interrupt (hopefully somewhat in distribution)

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

- queue input after the user submits `/compact` until that manual

compact turn ends

- mirror the same behavior in the app-server TUI

- add regressions for input queued before compact starts and while it is

running
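
The queueing behavior described above can be sketched as a small gate in front of the submit path. This is an illustration only: `InputGate`, `submit`, and `on_compact_turn_ended` are hypothetical names rather than the types used in the TUI, and the sketch assumes queued input is simply handed back to the caller once the compact turn ends.

```rust
use std::collections::VecDeque;

/// Hypothetical gate that holds user input while a manual compact turn runs.
#[derive(Default)]
pub struct InputGate {
    compact_pending: bool,
    queued: VecDeque<String>,
}

impl InputGate {
    /// Returns `Some(input)` when the input should be sent right away,
    /// or `None` when it was queued behind a pending `/compact`.
    pub fn submit(&mut self, input: &str) -> Option<String> {
        if self.compact_pending {
            self.queued.push_back(input.to_string());
            return None;
        }
        if input.trim() == "/compact" {
            // From this point until the compact turn ends, input is queued.
            self.compact_pending = true;
        }
        Some(input.to_string())
    }

    /// Called when the manual compact turn ends; returns the queued input
    /// in submission order so the caller can send it.
    pub fn on_compact_turn_ended(&mut self) -> Vec<String> {
        self.compact_pending = false;
        self.queued.drain(..).collect()
    }
}
```

Both regression cases map onto this shape: input submitted right after `/compact` (before the turn starts) and input submitted while it is running are queued the same way, because the flag flips at submit time rather than at turn start.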

Co-authored-by: Codex <noreply@openai.com>

## Why

Fixes [#15283](https://github.com/openai/codex/issues/15283), where

sandboxed tool calls fail on older distro `bubblewrap` builds because

`/usr/bin/bwrap` does not understand `--argv0`. The upstream [bubblewrap

v0.9.0 release

notes](https://github.com/containers/bubblewrap/releases/tag/v0.9.0)

explicitly call out `Add --argv0`. Flipping `use_legacy_landlock`

globally works around that compatibility bug, but it also weakens the

default Linux sandbox and breaks proxy-routed and split-policy cases

called out in review.

The follow-up Linux CI failure was in the new launcher test rather than

the launcher logic: the fake `bwrap` helper stayed open for writing, so

Linux would not exec it. This update also closes the user-visibility gap

from review by surfacing the same startup warning when `/usr/bin/bwrap`

is present but too old for `--argv0`, not only when it is missing.

## What Changed

- keep `use_legacy_landlock` default-disabled

- teach `codex-rs/linux-sandbox/src/launcher.rs` to fall back to the

vendored bubblewrap build when `/usr/bin/bwrap` does not advertise

`--argv0` support

- add launcher tests for supported, unsupported, and missing system

`bwrap`

- write the fake `bwrap` test helper to a closed temp path so the

supported-path launcher test works on Linux too

- extend the startup warning path so Codex warns when `/usr/bin/bwrap`

is missing or too old to support `--argv0`

- mirror the warning/fallback wording across

`codex-rs/linux-sandbox/README.md` and `codex-rs/core/README.md`,

including that the fallback is the vendored bubblewrap compiled into the

binary

- cite the upstream `bubblewrap` release that introduced `--argv0`
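
As a rough sketch of the launcher decision described above, the choice can be written as a pure function over the system binary's captured help text. The names here (`BwrapChoice`, `FallbackReason`, `choose_bwrap`) are hypothetical and are not the identifiers in `launcher.rs`; the assumption that support is detected by looking for `--argv0` in the help output is inferred from the test name `prefers_system_bwrap_when_help_lists_argv0`.

```rust
/// Which bubblewrap binary the launcher should run (hypothetical type).
#[derive(Debug, PartialEq, Eq)]
pub enum BwrapChoice {
    /// The distro binary at `/usr/bin/bwrap`.
    System,
    /// The bubblewrap build compiled into the Codex binary.
    Vendored,
}

/// Why the vendored build was chosen, so a startup warning can explain it.
#[derive(Debug, PartialEq, Eq)]
pub enum FallbackReason {
    Missing,
    TooOldForArgv0,
}

/// `system_help` is the captured help output of `/usr/bin/bwrap`,
/// or `None` when the binary is not present.
pub fn choose_bwrap(system_help: Option<&str>) -> (BwrapChoice, Option<FallbackReason>) {
    match system_help {
        None => (BwrapChoice::Vendored, Some(FallbackReason::Missing)),
        // Assumption: a build that supports the flag lists it in its help text.
        Some(help) if help.contains("--argv0") => (BwrapChoice::System, None),
        Some(_) => (BwrapChoice::Vendored, Some(FallbackReason::TooOldForArgv0)),
    }
}
```

Returning the reason alongside the choice keeps the warning path and the launcher path on the same decision, which is the property the tests for supported, unsupported, and missing system `bwrap` exercise.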

## Verification

- `bazel test --config=remote --platforms=//:rbe

//codex-rs/linux-sandbox:linux-sandbox-unit-tests

--test_filter=launcher::tests::prefers_system_bwrap_when_help_lists_argv0

--test_output=errors`

- `cargo test -p codex-core system_bwrap_warning`

- `cargo check -p codex-exec -p codex-tui -p codex-tui-app-server -p

codex-app-server`

- `just argument-comment-lint`

- emit a typed `thread/realtime/transcriptUpdated` notification from

live realtime transcript deltas

- expose that notification as flat `threadId`, `role`, and `text` fields

instead of a nested transcript array

- continue forwarding raw `handoff_request` items on

`thread/realtime/itemAdded`, including the accumulated

`active_transcript`

- update app-server docs, tests, and generated protocol schema artifacts

to match the delta-based payloads

---------

Co-authored-by: Codex <noreply@openai.com>

This PR adds a URI-based system for referencing agents within a tree. It comes out of a sync between research and engineering.

The main agent (the one manually spawned by a user) is always called `/root`. Any sub-agent spawned by it gets a path such as `/root/agent_1`, where `agent_1` is chosen by the model.

Any agent can contact any other agent using its path.

Paths can be either absolute or relative to the calling agent.

Resume is not yet supported for this new path system.
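
A minimal sketch of how such paths might be resolved, under stated assumptions: `resolve_agent_path` is a hypothetical helper, a leading `/` marks an absolute path, and a relative path is interpreted by appending it to the calling agent's own path. The PR does not spell out the exact relative semantics, so treat this as one plausible reading.

```rust
/// Resolve `target` against the path of the calling agent.
/// Returns `None` when the result does not live under `/root`.
pub fn resolve_agent_path(caller: &str, target: &str) -> Option<String> {
    let mut segments: Vec<&str> = Vec::new();
    if !target.starts_with('/') {
        // Assumption: relative targets are children of the calling agent.
        segments.extend(caller.split('/').filter(|s| !s.is_empty()));
    }
    segments.extend(target.split('/').filter(|s| !s.is_empty()));

    // The main agent is always `/root`, so every valid path starts there.
    if segments.first() != Some(&"root") {
        return None;
    }
    Some(format!("/{}", segments.join("/")))
}
```

With this reading, `/root` addressing `agent_1` resolves to `/root/agent_1`, and any agent can reach the main agent through the absolute path `/root`.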

## Summary

- make app-server treat `clientInfo.name == "codex-tui"` as a legacy

compatibility case

- fall back to `DEFAULT_ORIGINATOR` instead of sending `codex-tui` as

the originator header

- add a TODO noting this is a temporary workaround that should be

removed later

## Testing

- Not run (not requested)

- Split the feature system into a new `codex-features` crate.

- Cut `codex-core` and workspace consumers over to the new config and

warning APIs.

Co-authored-by: Ahmed Ibrahim <219906144+aibrahim-oai@users.noreply.github.com>

Co-authored-by: Codex <noreply@openai.com>

- Move the auth implementation and token data into codex-login.

- Keep codex-core re-exporting that surface from codex-login for

existing callers.

---------

Co-authored-by: Codex <noreply@openai.com>

For each feature we have:

1. Trait exposed on environment

2. **Local Implementation** of the trait

3. Remote implementation that uses the client to proxy via network

4. Handler implementation that handles RPC requests and calls into the **Local Implementation**
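
The four pieces above can be sketched for a single feature as follows. Everything here is illustrative: `FileSystem`, `Request`, `Response`, `Client`, and the struct names are hypothetical, an in-memory map stands in for the real disk, and the "network" is a direct call into the handler.

```rust
use std::collections::HashMap;

/// 1. Trait exposed on the environment (hypothetical file-system feature).
pub trait FileSystem {
    fn read_to_string(&self, path: &str) -> Result<String, String>;
}

/// 2. Local implementation; an in-memory map stands in for the real disk.
pub struct LocalFileSystem {
    pub files: HashMap<String, String>,
}

impl FileSystem for LocalFileSystem {
    fn read_to_string(&self, path: &str) -> Result<String, String> {
        self.files
            .get(path)
            .cloned()
            .ok_or_else(|| format!("no such file: {path}"))
    }
}

/// Hypothetical wire messages for this feature.
pub enum Request {
    ReadFile { path: String },
}

pub enum Response {
    FileContents(String),
    Error(String),
}

/// 4. Handler that serves RPC requests by calling the local implementation.
pub struct Handler<F: FileSystem> {
    pub local: F,
}

impl<F: FileSystem> Handler<F> {
    pub fn handle(&self, request: Request) -> Response {
        match request {
            Request::ReadFile { path } => match self.local.read_to_string(&path) {
                Ok(contents) => Response::FileContents(contents),
                Err(message) => Response::Error(message),
            },
        }
    }
}

/// Stand-in for the network client used by the remote implementation.
pub trait Client {
    fn call(&self, request: Request) -> Response;
}

/// In this sketch the transport is a direct call into the handler.
impl<F: FileSystem> Client for Handler<F> {
    fn call(&self, request: Request) -> Response {
        self.handle(request)
    }
}

/// 3. Remote implementation that proxies every call through the client.
pub struct RemoteFileSystem<C: Client> {
    pub client: C,
}

impl<C: Client> FileSystem for RemoteFileSystem<C> {
    fn read_to_string(&self, path: &str) -> Result<String, String> {
        match self.client.call(Request::ReadFile { path: path.to_string() }) {
            Response::FileContents(contents) => Ok(contents),
            Response::Error(message) => Err(message),
        }
    }
}
```

Callers that hold a `FileSystem` cannot tell whether they are talking to `LocalFileSystem` or to `RemoteFileSystem`, which is the point of routing both through the same trait.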

## Summary

Persist Stop-hook continuation prompts as `user` messages instead of hidden `developer` messages, and add some requested integration tests.

This is a follow-up to @pakrym's comment in

https://github.com/openai/codex/pull/14532 to make sure stop-block

continuation prompts match training for turn loops

- Stop continuation now writes `<hook_prompt hook_run_id="...">stop hook's user prompt</hook_prompt>`

- Introduces quick-xml dependency, though we already indirectly depended

on it anyway via syntect

- This PR only has about 500 lines of actual logic changes; the rest is tests/schema

## Testing

Example run (with a SessionStart hook and three Stop hooks). It shows context added by session start, then two Stop hooks sending their own additional prompts in a new turn. The model responds with a single message addressing both. When that turn ends, the hooks detect via `stop_hook_active` that they just ran and decline to continue, which avoids an infinite loop.

Test files for this (unzip, then move `codex` -> `.codex`):

[codex.zip](https://github.com/user-attachments/files/26075806/codex.zip)

```

› cats

• Running SessionStart hook: lighting the observatory

SessionStart hook (completed)

warning: Hi, I'm a session start hook for wizard-tower (startup).

hook context: A wimboltine stonpet is an exotic cuisine from hyperspace

• Cats are tiny zen wizards, my friend: equal parts nap, mystery, and chaos. If you want, we can talk

cat facts, cat breeds, cat names, or build something cat-themed in this repo.

• Running Stop hook: checking the tower wards

• Running Stop hook: sacking the guards

• Running Stop hook: hiring the guards

Stop hook (completed)

warning: Wizard Tower Stop hook reviewed the completed reply (177 chars).

Stop hook (blocked)

warning: Wizard Tower Stop hook continuing conversation

feedback: cook the stonpet

Stop hook (blocked)

warning: Wizard Tower Stop hook continuing conversation

feedback: eat the cooked stonpet

• Stonpet’s cooked, aloha style: flash-seared over a blue quasiflame, glazed with nebula salt, and

rested until the hyperspace juices settle.

Now we eat with gratitude, my friend. One mindful bite in, and the flavor is pure cosmic surf:

smoky, bright, and totally out of this dimension.

• Running Stop hook: checking the tower wards

• Running Stop hook: sacking the guards

• Running Stop hook: hiring the guards

Stop hook (completed)

warning: Wizard Tower Stop hook reviewed the completed reply (285 chars).

Stop hook (completed)

warning: Wizard Tower Stop hook saw a second pass and stayed calm to avoid a loop.

Stop hook (completed)

warning: Wizard Tower Stop hook saw a second pass and stayed calm to avoid a loop.

```

The idea is that codex-exec exposes an `Environment` struct with services on it, each of which is a trait.

Depending on the construction parameters passed to `Environment`, the services are backed by either a local or a remote server, but core does not see the difference.

1. Added SessionSource::Custom(String) and --session-source.

2. Enforced plugin and skill products by session_source.

3. Applied the same filtering to curated background refresh.

This PR adds a new `thread/shellCommand` app server API so clients can

implement `!` shell commands. These commands are executed within the

sandbox, and the command text and output are visible to the model.

The internal implementation mirrors the current TUI `!` behavior.

- persist shell command execution as `CommandExecution` thread items,

including source and formatted output metadata

- bridge live and replayed app-server command execution events back into

the existing `tui_app_server` exec rendering path

This PR also wires `tui_app_server` to submit `!` commands through the

new API.

Resubmit https://github.com/openai/codex/pull/15020 with correct

content.

1. Use requirement-resolved config.features as the plugin gate.

2. Guard plugin/list, plugin/read, and related flows behind that gate.

3. Skip bad marketplace.json files instead of failing the whole list.

4. Simplify plugin state and caching.

## Summary

This PR makes `thread/resume` reuse persisted thread model metadata when

the caller does not explicitly override it.

Changes:

- read persisted thread metadata from SQLite during `thread/resume`

- reuse persisted `model` and `model_reasoning_effort` as resume-time

defaults

- fetch persisted metadata once and reuse it later in the resume

response path

- keep thread summary loading on the existing rollout path, while

reusing persisted metadata when available

- document the resume fallback behavior in the app-server README

## Why

Before this change, resuming a thread without explicit overrides derived

`model` and `model_reasoning_effort` from current config, which could

drift from the thread’s last persisted values. That meant a resumed

thread could report and run with different model settings than the ones

it previously used.

## Behavior

Precedence on `thread/resume` is now:

1. explicit resume overrides

2. persisted SQLite metadata for the thread

3. normal config resolution for the resumed cwd
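
The precedence above can be sketched as a chain of fallbacks. `ModelSettings` and `resolve_resume_settings` are hypothetical names, and resolving each field independently is an assumption of this sketch rather than something the PR states.

```rust
/// Hypothetical container for the two settings discussed above.
#[derive(Debug, Clone, Default, PartialEq, Eq)]
pub struct ModelSettings {
    pub model: Option<String>,
    pub model_reasoning_effort: Option<String>,
}

/// Resolve resume-time settings: explicit overrides first, then persisted
/// thread metadata (if any), then normal config resolution.
pub fn resolve_resume_settings(
    overrides: &ModelSettings,
    persisted: Option<&ModelSettings>,
    config: &ModelSettings,
) -> ModelSettings {
    ModelSettings {
        model: overrides
            .model
            .clone()
            .or_else(|| persisted.and_then(|p| p.model.clone()))
            .or_else(|| config.model.clone()),
        model_reasoning_effort: overrides
            .model_reasoning_effort
            .clone()
            .or_else(|| persisted.and_then(|p| p.model_reasoning_effort.clone()))
            .or_else(|| config.model_reasoning_effort.clone()),
    }
}
```

Passing `persisted` as an `Option` mirrors the case where no SQLite metadata exists for the thread, in which case resolution falls straight through to config.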


## Summary

- move `guardian_developer_instructions` from managed config into

workspace-managed `requirements.toml`

- have guardian continue using the override when present and otherwise

fall back to the bundled local guardian prompt

- keep the generalized prompt-quality improvements in the shared

guardian default prompt

- update requirements parsing, layering, schema, and tests for the new

source of truth

## Context

This replaces the earlier managed-config / MDM rollout plan.

The intended rollout path is workspace-managed requirements, including

cloud enterprise policies, rather than backend model metadata, Statsig,

or Jamf-managed config. That keeps the default/fallback behavior local

to `codex-rs` while allowing faster policy updates through the

enterprise requirements plane.

This is intentionally an admin-managed policy input, not a user

preference: the guardian prompt should come either from the bundled

`codex-rs` default or from enterprise-managed `requirements.toml`, and

normal user/project/session config should not override it.

## Updating The OpenAI Prompt

After this lands, the OpenAI-specific guardian prompt should be updated

through the workspace Policies UI at `/codex/settings/policies` rather

than through Jamf or codex-backend model metadata.

Operationally:

- open the workspace Policies editor as a Codex admin

- edit the default `requirements.toml` policy, or a higher-precedence

group-scoped override if we ever want different behavior for a subset of

users

- set `guardian_developer_instructions = """..."""` to the full

OpenAI-specific guardian prompt text

- save the policy; codex-backend stores the raw TOML and `codex-rs`

fetches the effective requirements file from `/wham/config/requirements`

When updating the OpenAI-specific prompt, keep it aligned with the

shared default guardian policy in `codex-rs` except for intentional

OpenAI-only additions.

## Testing

- `cargo check --tests -p codex-core -p codex-config -p

codex-cloud-requirements --message-format short`

- `cargo run -p codex-core --bin codex-write-config-schema`

- `cargo fmt`

- `git diff --check`

Co-authored-by: Codex <noreply@openai.com>

Adds an environment crate and an environment + file system abstraction.

Environment is a combination of attributes and services specific to the environment the agent is connected to: file system, process management, OS, and default shell.

The goal is to move most of the agent logic that makes assumptions about the environment to work through the environment abstraction.

- Add shared Product support to marketplace plugin policy and skill policy (not enforced yet).

- Move marketplace installation/authentication under policy and model it

as MarketplacePluginPolicy.

- Rename plugin/marketplace local manifest types to separate raw serde

shapes from resolved in-memory models.

## Problem

Ubuntu/AppArmor hosts started failing in the default Linux sandbox path

after the switch to vendored/default bubblewrap in `0.115.0`.

The clearest report is in

[#14919](https://github.com/openai/codex/issues/14919), especially [this

investigation

comment](https://github.com/openai/codex/issues/14919#issuecomment-4076504751):

on affected Ubuntu systems, `/usr/bin/bwrap` works, but a copied or

vendored `bwrap` binary fails with errors like `bwrap: setting up uid

map: Permission denied` or `bwrap: loopback: Failed RTM_NEWADDR:

Operation not permitted`.

The root cause is Ubuntu's `/etc/apparmor.d/bwrap-userns-restrict`

profile, which grants `userns` access specifically to `/usr/bin/bwrap`.

Once Codex started using a vendored/internal bubblewrap path, that path

was no longer covered by the distro AppArmor exception, so sandbox

namespace setup could fail even when user namespaces were otherwise

enabled and `uidmap` was installed.

## What this PR changes

- prefer system `/usr/bin/bwrap` whenever it is available

- keep vendored bubblewrap as the fallback when `/usr/bin/bwrap` is

missing

- when `/usr/bin/bwrap` is missing, surface a Codex startup warning

through the app-server/TUI warning path instead of printing directly

from the sandbox helper with `eprintln!`

- use the same launcher decision for both the main sandbox execution

path and the `/proc` preflight path

- document the updated Linux bubblewrap behavior in the Linux sandbox

and core READMEs

## Why this fix

This still fixes the Ubuntu/AppArmor regression from

[#14919](https://github.com/openai/codex/issues/14919), but it keeps the

runtime rule simple and platform-agnostic: if the standard system

bubblewrap is installed, use it; otherwise fall back to the vendored

helper.

The warning now follows that same simple rule. If Codex cannot find

`/usr/bin/bwrap`, it tells the user that it is falling back to the

vendored helper, and it does so through the existing startup warning

plumbing that reaches the TUI and app-server instead of low-level

sandbox stderr.

## Testing

- `cargo test -p codex-linux-sandbox`

- `cargo test -p codex-app-server --lib`

- `cargo test -p codex-tui-app-server

tests::embedded_app_server_start_failure_is_returned`

- `cargo clippy -p codex-linux-sandbox --all-targets`

- `cargo clippy -p codex-app-server --all-targets`

- `cargo clippy -p codex-tui-app-server --all-targets`

- route realtime startup, input, and transport failures through a single

shutdown path

- emit one realtime error/closed lifecycle while clearing session state

once

---------

Co-authored-by: Codex <noreply@openai.com>

Co-authored-by: Ahmed Ibrahim <219906144+aibrahim-oai@users.noreply.github.com>

- thread the realtime version into conversation start and app-server

notifications

- keep playback-aware mic gating and playback interruption behavior on

v2 only, leaving v1 on the legacy path

## What is flaky

`codex-rs/app-server/tests/suite/fuzzy_file_search.rs` intermittently

loses the expected `fuzzyFileSearch/sessionUpdated` and

`fuzzyFileSearch/sessionCompleted` notifications when multiple

fuzzy-search sessions are active and CI delivers notifications out of

order.

## Why it was flaky

The wait helpers were keyed only by JSON-RPC method name.

- `wait_for_session_updated` consumed the next

`fuzzyFileSearch/sessionUpdated` notification even when it belonged to a

different search session.

- `wait_for_session_completed` did the same for

`fuzzyFileSearch/sessionCompleted`.

- Once an unmatched notification was read, it was dropped permanently

instead of buffered.

- That meant a valid completion for the target search could arrive

slightly early, be consumed by the wrong waiter, and disappear before

the test started waiting for it.

The result depended on notification ordering and runner scheduling

instead of on the actual product behavior.

## How this PR fixes it

- Add a buffered notification reader in

`codex-rs/app-server/tests/common/mcp_process.rs`.

- Match fuzzy-search notifications on the identifying payload fields

instead of matching only on method name.

- Preserve unmatched notifications in the in-process queue so later

waiters can still consume them.

- Include pending notification methods in timeout failures to make

future diagnosis concrete.
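
A minimal sketch of the buffered-reader idea, not the code in `mcp_process.rs`: `Notification`, `BufferedReader`, and `wait_for` are hypothetical names, `session_id` stands in for whichever payload fields identify a search session, and an exhausted iterator stands in for a timeout.

```rust
use std::collections::VecDeque;

/// Simplified notification: a method name plus one identifying payload field.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct Notification {
    pub method: String,
    pub session_id: String,
}

/// Reader that keeps unmatched notifications for later waiters.
pub struct BufferedReader<I: Iterator<Item = Notification>> {
    incoming: I,
    pending: VecDeque<Notification>,
}

impl<I: Iterator<Item = Notification>> BufferedReader<I> {
    pub fn new(incoming: I) -> Self {
        Self { incoming, pending: VecDeque::new() }
    }

    /// Wait for a notification matching both the method and the session.
    pub fn wait_for(&mut self, method: &str, session_id: &str) -> Result<Notification, String> {
        let matches = |n: &Notification| n.method == method && n.session_id == session_id;

        // Check notifications that earlier waiters skipped over.
        if let Some(index) = self.pending.iter().position(|n| matches(n)) {
            return Ok(self.pending.remove(index).expect("index came from position"));
        }

        // Read new notifications, buffering anything that does not match.
        while let Some(notification) = self.incoming.next() {
            if matches(&notification) {
                return Ok(notification);
            }
            self.pending.push_back(notification);
        }

        // Report what is still pending so a failure is diagnosable.
        let methods: Vec<&str> = self.pending.iter().map(|n| n.method.as_str()).collect();
        Err(format!("timed out waiting for {method} ({session_id}); pending: {methods:?}"))
    }
}
```

Because a skipped notification goes into `pending` instead of being dropped, a completion that arrives early for another session is still there when its own waiter runs.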

## Why this fix fixes the flakiness

The test now behaves like a real consumer of an out-of-order event

stream: notifications for other sessions stay buffered until the correct

waiter asks for them. Reordering no longer loses the target event, so

the test result is determined by whether the server emitted the right

notifications, not by which one happened to be read first.

Co-authored-by: Ahmed Ibrahim <219906144+aibrahim-oai@users.noreply.github.com>

Co-authored-by: Codex <noreply@openai.com>

## Stack Position

2/4. Built on top of #14828.

## Base

- #14828

## Unblocks

- #14829

- #14827

## Scope

- Port the realtime v2 wire parsing, session, app-server, and

conversation runtime behavior onto the split websocket-method base.

- Branch runtime behavior directly on the current realtime session kind

instead of parser-derived flow flags.

- Keep regression coverage in the existing e2e suites.

---------

Co-authored-by: Codex <noreply@openai.com>

- Added forceRemoteSync to plugin/install and plugin/uninstall.

- With forceRemoteSync=true, we update the remote plugin status first,

then apply the local change only if the backend call succeeds.

- Kept plugin/list(forceRemoteSync=true) as the main reconciliation path; for now it treats remote enabled=false as uninstall. We will eventually migrate to plugin/installed for more precise state handling.
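
The remote-first ordering for install can be sketched like this. `RemoteBackend`, `install_plugin`, and `FakeBackend` are hypothetical names for illustration; the real handlers and backend client are not shown in this description.

```rust
use std::collections::BTreeSet;

/// Hypothetical stand-in for the backend call that records remote plugin status.
pub trait RemoteBackend {
    fn set_installed(&mut self, plugin: &str, installed: bool) -> Result<(), String>;
}

/// Install `plugin` locally. With `force_remote_sync`, the remote status is
/// updated first and the local change is applied only if that call succeeds.
pub fn install_plugin<B: RemoteBackend>(
    backend: &mut B,
    local: &mut BTreeSet<String>,
    plugin: &str,
    force_remote_sync: bool,
) -> Result<(), String> {
    if force_remote_sync {
        // An error here returns early, leaving local state untouched.
        backend.set_installed(plugin, /*installed*/ true)?;
    }
    local.insert(plugin.to_string());
    Ok(())
}

/// Test double whose remote call can be made to fail.
pub struct FakeBackend {
    pub fail: bool,
    pub calls: usize,
}

impl RemoteBackend for FakeBackend {
    fn set_installed(&mut self, _plugin: &str, _installed: bool) -> Result<(), String> {
        self.calls += 1;
        if self.fail {
            Err("backend unavailable".to_string())
        } else {
            Ok(())
        }
    }
}
```

Ordering the remote call first means a backend failure can never leave the local state ahead of the remote state; uninstall would follow the same shape with the flag inverted.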

## Why

Once the repo-local lint exists, `codex-rs` needs to follow the

checked-in convention and CI needs to keep it from drifting. This commit

applies the fallback `/*param*/` style consistently across existing

positional literal call sites without changing those APIs.

The longer-term preference is still to avoid APIs that require comments

by choosing clearer parameter types and call shapes. This PR is

intentionally the mechanical follow-through for the places where the

existing signatures stay in place.

After rebasing onto newer `main`, the rollout also had to cover newly

introduced `tui_app_server` call sites. That made it clear the first cut

of the CI job was too expensive for the common path: it was spending

almost as much time installing `cargo-dylint` and re-testing the lint

crate as a representative test job spends running product tests. The CI

update keeps the full workspace enforcement but trims that extra

overhead from ordinary `codex-rs` PRs.

## What changed

- keep a dedicated `argument_comment_lint` job in `rust-ci`

- mechanically annotate remaining opaque positional literals across

`codex-rs` with exact `/*param*/` comments, including the rebased

`tui_app_server` call sites that now fall under the lint

- keep the checked-in style aligned with the lint policy by using

`/*param*/` and leaving string and char literals uncommented

- cache `cargo-dylint`, `dylint-link`, and the relevant Cargo

registry/git metadata in the lint job

- split changed-path detection so the lint crate's own `cargo test` step

runs only when `tools/argument-comment-lint/*` or `rust-ci.yml` changes

- continue to run the repo wrapper over the `codex-rs` workspace, so

product-code enforcement is unchanged

Most of the code changes in this commit are intentionally mechanical

comment rewrites or insertions driven by the lint itself.
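
For readers unfamiliar with the convention, here is a small illustration of the `/*param*/` style on a made-up function. `truncate_output` and `demo` are hypothetical and do not exist in `codex-rs`; only the comment style is the point.

```rust
/// Hypothetical API whose positional parameters read poorly as bare literals.
pub fn truncate_output(text: &str, max_lines: usize, keep_tail: bool) -> String {
    let lines: Vec<&str> = text.lines().collect();
    let kept = if keep_tail {
        // Keep the last `max_lines` lines.
        &lines[lines.len().saturating_sub(max_lines)..]
    } else {
        // Keep the first `max_lines` lines.
        &lines[..max_lines.min(lines.len())]
    };
    kept.join("\n")
}

pub fn demo() -> String {
    // The string literal stays uncommented; the opaque numeric and boolean
    // literals carry the exact parameter names.
    truncate_output("a\nb\nc", /*max_lines*/ 2, /*keep_tail*/ true)
}
```

Without the comments, the call would read `truncate_output("a\nb\nc", 2, true)`, which is the kind of opaque positional literal the lint flags.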

## Verification

- `./tools/argument-comment-lint/run.sh --workspace`

- `cargo test -p codex-tui-app-server -p codex-tui`

- parsed `.github/workflows/rust-ci.yml` locally with PyYAML

---

* -> #14652

* #14651